Summary

QAT doesn't seem to make a big difference in generation speed. I'll have to actually use it to judge output quality.

MTP seems to give roughly a 50% speedup?

---

QAT

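Ooh, a fresh model released just 3-4 days ago!

It's only about 3-4 GB, so I'm really curious how it holds up.

[Link : https://huggingface.co/unsloth/gemma-4-E4B-it-qat-GGUF]

What I had been testing before was Q4_K_M, so I'm not sure this is a like-for-like comparison.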

$ ../../llama-b9553/llama-cli -m gemma-4-E4B-it-qat-UD-Q2_K_XL.gguf -sm none
[ Prompt: 16.8 t/s | Generation: 38.6 t/s ]
[ Prompt: 97.9 t/s | Generation: 41.1 t/s ]
[ Prompt: 196.1 t/s | Generation: 39.9 t/s ]

$ ../../llama-b9553/llama-cli -m gemma-4-E4B-it-qat-UD-Q4_K_XL.gguf -sm none
[ Prompt: 737.0 t/s | Generation: 62.5 t/s ]
[ Prompt: 238.5 t/s | Generation: 61.4 t/s ]
[ Prompt: 292.3 t/s | Generation: 58.0 t/s ]
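To boil each set of three runs down to one number, here's a small sh/awk sketch that averages the Generation values. It assumes the result lines look exactly like the ones above; `avg_gen` is just a helper name I made up, not anything from llama.cpp.

```sh
#!/bin/sh
# Average the "Generation: N t/s" values from llama-cli result lines on stdin.
# Assumption: each line looks like "[ Prompt: 16.8 t/s | Generation: 38.6 t/s ]".
avg_gen() {
  awk '
    match($0, /Generation: [0-9.]+/) {
      # RSTART/RLENGTH span "Generation: 38.6"; skip the 12-char label
      sum += substr($0, RSTART + 12, RLENGTH - 12)
      n++
    }
    END { if (n > 0) printf "%.1f\n", sum / n }
  '
}

# UD-Q2_K_XL runs from above
printf '%s\n' \
  '[ Prompt: 16.8 t/s | Generation: 38.6 t/s ]' \
  '[ Prompt: 97.9 t/s | Generation: 41.1 t/s ]' \
  '[ Prompt: 196.1 t/s | Generation: 39.9 t/s ]' | avg_gen   # 39.9

# UD-Q4_K_XL runs from above
printf '%s\n' \
  '[ Prompt: 737.0 t/s | Generation: 62.5 t/s ]' \
  '[ Prompt: 238.5 t/s | Generation: 61.4 t/s ]' \
  '[ Prompt: 292.3 t/s | Generation: 58.0 t/s ]' | avg_gen   # 60.6
```

So on this setup it's roughly 40 t/s for UD-Q2_K_XL vs roughly 61 t/s for UD-Q4_K_XL.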

MTP

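So MTP, like multimodal, needs two model files (the main model plus a separate MTP draft model)..

For now I have to build with CUDA enabled.. hope the SDK doesn't give me trouble.. meh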

./build/bin/llama-server \

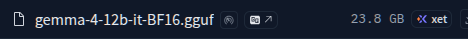

-m gemma-4-12b-it-Q4_K_M.gguf \

--model-draft MTP/gemma-4-12B-it-MTP-Q8_0.gguf \

--spec-type draft-mtp --spec-draft-n-max 4 \

-ngl 999 -fa on

Multi GPU: add --spec-draft-device CUDA0 -sm layer. |

[Link : https://huggingface.co/unsloth/gemma-4-12b-it-GGUF/blob/main/MTP/README.md]

+

Hmm.. the build attempt blew up spectacularly lol

$ cmake -B build -DGGML_CUDA=ON -DCMAKE_CUDA_ARCHITECTURES=61
CMAKE_BUILD_TYPE=Release
-- Warning: ccache not found - consider installing it for faster compilation or disable this warning with GGML_CCACHE=OFF
-- CMAKE_SYSTEM_PROCESSOR: x86_64
-- GGML_SYSTEM_ARCH: x86
-- Including CPU backend
-- x86 detected
-- Adding CPU backend variant ggml-cpu: -march=native
-- Unable to find cublas_v2.h in either "/usr/local/cuda/include" or "/usr/math_libs/include"
-- CUDA Toolkit found
CMake Error at /usr/share/cmake-3.22/Modules/CMakeDetermineCompilerId.cmake:726 (message):
  Compiling the CUDA compiler identification source file
  "CMakeCUDACompilerId.cu" failed.
  Compiler: /usr/local/cuda/bin/nvcc
  Build flags:
  Id flags: --keep;--keep-dir;tmp;-gencode=arch=compute_61,code=sm_61 -v
  The output was:
  1
  nvcc fatal : Unsupported gpu architecture 'compute_61'
Call Stack (most recent call first):
  /usr/share/cmake-3.22/Modules/CMakeDetermineCompilerId.cmake:6 (CMAKE_DETERMINE_COMPILER_ID_BUILD)
  /usr/share/cmake-3.22/Modules/CMakeDetermineCompilerId.cmake:48 (__determine_compiler_id_test)
  /usr/share/cmake-3.22/Modules/CMakeDetermineCUDACompiler.cmake:298 (CMAKE_DETERMINE_COMPILER_ID)
  ggml/src/ggml-cuda/CMakeLists.txt:59 (enable_language)
-- Configuring incomplete, errors occurred!
See also "/home/falinux/src/llama.cpp/build/CMakeFiles/CMakeOutput.log".
See also "/home/falinux/src/llama.cpp/build/CMakeFiles/CMakeError.log".
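The line that matters is `nvcc fatal : Unsupported gpu architecture 'compute_61'`: the installed nvcc can't target the 1080 Ti (Pascal, compute capability 6.1) at all. As far as I know, recent CUDA toolkits (13.x) dropped compilation for pre-Turing GPUs, so an older toolkit is probably needed; I haven't verified that on this box. The `cublas_v2.h` message also suggests the cuBLAS headers are missing. A quick check, assuming `nvcc --list-gpu-arch` prints one arch name per line (`arch_supported` is a made-up helper):

```sh
#!/bin/sh
# Succeeds if the GPU arch given as $1 (e.g. compute_61) appears in the list on stdin.
# Assumption: stdin is the output of `nvcc --list-gpu-arch`, one arch name per line.
arch_supported() {
  grep -qw -- "$1"
}

# Only runs the real check if nvcc is on PATH.
if command -v nvcc >/dev/null 2>&1; then
  nvcc --version | grep -i release || true
  if nvcc --list-gpu-arch | arch_supported compute_61; then
    echo "this nvcc can target compute_61 (GTX 1080 Ti)"
  else
    echo "this nvcc cannot target compute_61; an older CUDA toolkit is probably needed"
  fi
fi
```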

+

Is b9500 just too old for this.. or does it fail because it's the Vulkan build?

$ ../../llama-b9500/llama-cli -m gemma-4-12b-it-Q4_0.gguf --model-draft gemma-4-12B-it-MTP-Q8_0.gguf --spec-type draft-mtp --spec-draft-n-max 4 -ngl 999 -fa on --verbose
0.19.888.319 E llama_model_load: error loading model: unknown model architecture: 'gemma4-assistant'
0.19.888.322 E llama_model_load_from_file_impl: failed to load model
0.19.888.324 E srv load_model: failed to load draft model, 'gemma-4-12B-it-MTP-Q8_0.gguf'

With b9553 it works.

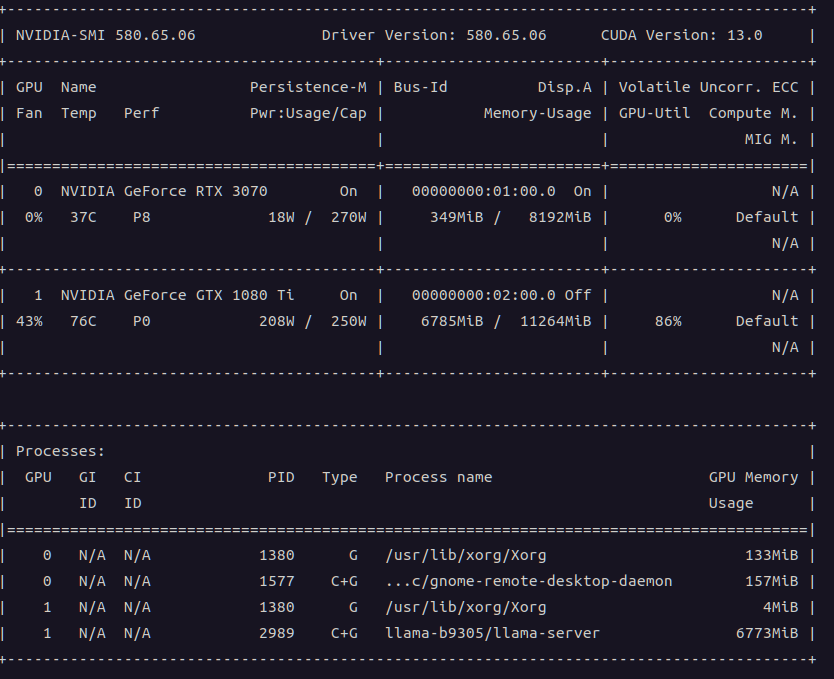

1080 ti 11GB / -sm none

$ ../../llama-b9553/llama-cli -m gemma-4-12b-it-Q4_0.gguf --model-draft gemma-4-12B-it-MTP-Q8_0.gguf --spec-type draft-mtp --spec-draft-n-max 4 -ngl 999 -fa on -sm none

Q4_0
[ Prompt: 48.6 t/s | Generation: 42.6 t/s ]
[ Prompt: 231.5 t/s | Generation: 36.6 t/s ]
[ Prompt: 241.1 t/s | Generation: 34.0 t/s ]

UD_Q2_K_XL
[ Prompt: 5.0 t/s | Generation: 21.1 t/s ]
[ Prompt: 80.7 t/s | Generation: 29.2 t/s ]
[ Prompt: 45.0 t/s | Generation: 24.4 t/s ]

1080 ti 11GB / -sm layer (the default split mode, so no -sm flag in the command)

$ ../../llama-b9553/llama-cli -m gemma-4-12b-it-Q4_0.gguf --model-draft gemma-4-12B-it-MTP-Q8_0.gguf --spec-type draft-mtp --spec-draft-n-max 4 -ngl 999 -fa on

Q4_0
[ Prompt: 66.8 t/s | Generation: 28.5 t/s ]
[ Prompt: 126.1 t/s | Generation: 19.3 t/s ]
[ Prompt: 88.2 t/s | Generation: 16.3 t/s ]

UD_Q2_K_XL
[ Prompt: 36.5 t/s | Generation: 24.6 t/s ]
[ Prompt: 32.1 t/s | Generation: 17.1 t/s ]
[ Prompt: 47.3 t/s | Generation: 12.6 t/s ] (crashed once)

>>>>> For reference >>>>>

Hardware: 1080 ti, -sm none
gemma-4 12B it Q4_0.gguf Reading Generation 25 tokens 0.9s 27.94 t/s
gemma-4 12B it Q4_0.gguf Reading Generation 255 tokens 8.9s 28.78 t/s
gemma-4 12B it Q4_0.gguf Reading Generation 1,404 tokens 55s 25.45 t/s
gemma-4 12B it UD Q2_K_XL.gguf Reading Generation 29 tokens 1.2s 23.71 t/s
gemma-4 12B it UD Q2_K_XL.gguf Reading Generation 373 tokens 16s 22.28 t/s
gemma-4 12B it UD Q2_K_XL.gguf Reading Generation 806 tokens 37s 21.34 t/s (crashed)

Hardware: 1080 ti, -sm layer
gemma-4 12B it Q4_0.gguf Reading Generation 25 tokens 0.8s 31.04 t/s
gemma-4 12B it Q4_0.gguf Reading Generation 265 tokens 9.0s 29.60 t/s
gemma-4 12B it Q4_0.gguf Reading Generation 1,340 tokens 54s 24.43 t/s
gemma-4 12B it UD Q2_K_XL.gguf Reading Generation 31 tokens 1.3s 24.16 t/s
gemma-4 12B it UD Q2_K_XL.gguf Reading Generation 263 tokens 11s 23.70 t/s
gemma-4 12B it UD Q2_K_XL.gguf Reading Generation 620 tokens 29s 20.70 t/s (crashed)

2026.06.04 - [Program usage/AI programs] - gemma 12b, tesla t4 16GB / 1080 ti 11GB * 2

<<<< For reference <<<<
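To check the "~50%" in the summary, the per-run ratio for Q4_0 / -sm none can be computed from the MTP numbers and the reference numbers from the earlier post (presumably without MTP). This small sketch pairs runs by order, which assumes the same three prompts were used in both tests; `speedup_table` is a made-up helper.

```sh
#!/bin/sh
# Reads "mtp_tps baseline_tps" pairs on stdin and prints the speedup of each pair.
# Assumption: pairs are matched by run order (same prompts in both tests).
speedup_table() {
  awk 'NF == 2 && $2 > 0 { printf "run %d: x%.2f\n", NR, $1 / $2 }'
}

# Q4_0, -sm none: MTP generation t/s vs. reference t/s
printf '%s %s\n' 42.6 27.94  36.6 28.78  34.0 25.45 | speedup_table
# run 1: x1.52
# run 2: x1.27
# run 3: x1.34
```

So only the first pair is really near +50%; the other two land around +27% to +34%. The same helper can be fed the -sm layer numbers as well.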