14,208 posts filed under 'Miscellany'

- 2025.07.04 Tired

- 2025.07.03 Steam Summer Sale: first purchase in a long while 2

- 2025.07.03 Book: 부서지는 아이들

- 2025.07.03 Mobile plan change complete

- 2025.07.03 Wait, what is this?!? (KB Kookmin Card authentication discontinued)

- 2025.07.02 USIM arrived

- 2025.07.01 ubuntu dhcp lease log

- 2025.06.30 sql delete cascade

- 2025.06.30 stm32 wwdg vs. iwdg

- 2025.06.29 Phone bill shock + USIM purchase

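GRIS is cheap to begin with and is 90% off,

while Deserts of Kharak is still expensive even at 90% off (!)

Anyway, I have always liked Homeworld, and GRIS appeals to me for some reason and is cheap, so I bought both!

Things like NieR:Automata will have to wait for the next sale.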


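A book I came across while watching YouTube.

In a sense it may not carry much weight, since the author is not a doctor but a journalist who went to law school.

Even so, I think it makes a fairly sharp point about what is going on these days.

There is a lot of talk lately about "금쪽이" kids and the like.

The gist of the book: crude as it may sound, much like shouting "you don't have an illness until a hospital diagnoses it!",

children now pay for a diagnosis of a mental condition, take medication,

and lose the ability to recover and overcome things on their own. Do they have a future?

The passages that struck me personally (page numbers are from the Korean edition):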

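p. 34: Iatrogenesis is the term that covers all of these cases.

p. 104: It never occurred to them that by purchasing a diagnosis they might give the child a negative self-perception that had not been there before.

p. 274: Concluding that you have a sensitive child makes a parent feel proud, because it implies you are a parent sensitive and attentive enough to notice.

p. 293: They need an authoritative father.

p. 294: Most of the young people drawn into extreme supremacism were raised in liberal households. ... A good many come from gentle, liberal homes.

p. 295: The extremist group ends up taking over the role of the parents.

p. 331: Thanks to her new rule nobody got out anymore, so the tension was gone, and with it the fun. The kids stopped playing handball. And they lost the chance to resolve conflicts among themselves.

p. 371: Parenting experts often use the phrase "deciding to have a child." It makes having a child sound like buying a product.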

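Finished porting the kids' numbers and my own.

By the way, this is my first time on a partner-bundled plan.

I wavered between the one that includes 밀리의 서재 and the one with a 5,000-won Olive Young coupon,

and went with the Olive Young coupon since my wife will probably use it, lol.

Kid #1 gets less data now, so that will sting a bit, lol.

Kid #2 is only now learning how to use a phone, so a fairly generous allowance (really, it is just the cheapest plan).

Me: past 10 GB and now at 17 GB, heh heh.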


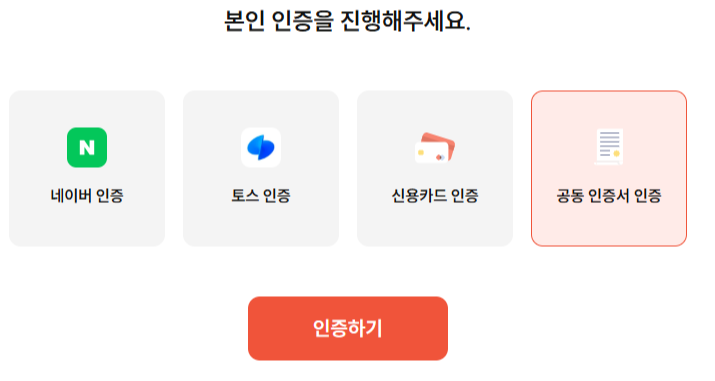

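Huh, why did KB Kookmin Card authentication disappear?!?!

And on top of that (?), Lotte Card is ending it next month too.

From the articles I found, the impression is that they kept it running for 8 years, it never made money, and so it is being shut down.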


According to GPT:

| $ cat /var/lib/dhcp/dhclient.leases |

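This appears to be a log that is written after dhclient runs.

Anyway, somehow (?) the wired connection looked fine, but the wireless side was getting an odd DHCP range, so I checked

and found that the DHCP server was not the expected 192.168.10.1 but 192.168.10.75.

Ah.. there are two DHCP servers on the network, so things went haywire..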

| lease { interface "wlo1"; fixed-address 192.168.10.163; option subnet-mask 255.255.0.0; option routers 192.168.10.1; option dhcp-lease-time 86400; option dhcp-message-type 5; option domain-name-servers 192.168.10.1; option dhcp-server-identifier 192.168.10.75; option host-name "minimonk-notebook"; option domain-name "vyos.net"; renew 2 2025/07/01 12:34:49; rebind 3 2025/07/02 00:07:12; expire 3 2025/07/02 03:07:12; } |

+

| sudo dhclient wlo1 |
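A rough sketch of how the same check could be scripted: parse the lease file and flag any lease whose `dhcp-server-identifier` is not the server you expect. The function names `parse_leases` and `unexpected_servers` are made up for illustration, the parser only handles simple `key value;` statements like the ones in the lease shown above, and the sample text is copied from that lease.

```python
import re

# Sample copied from the lease shown in this post.
SAMPLE_LEASE = '''
lease {
  interface "wlo1";
  fixed-address 192.168.10.163;
  option subnet-mask 255.255.0.0;
  option routers 192.168.10.1;
  option dhcp-lease-time 86400;
  option dhcp-server-identifier 192.168.10.75;
  renew 2 2025/07/01 12:34:49;
}
'''


def parse_leases(text):
    """Return one dict per 'lease { ... }' block (hypothetical helper)."""
    leases = []
    for block in re.findall(r"lease\s*\{(.*?)\}", text, flags=re.S):
        lease = {}
        for stmt in block.split(";"):
            stmt = stmt.strip()
            if not stmt:
                continue
            # Drop the leading "option " so keys look like "routers".
            if stmt.startswith("option "):
                stmt = stmt[len("option "):]
            key, _, value = stmt.partition(" ")
            lease[key] = value.strip().strip('"')
        leases.append(lease)
    return leases


def unexpected_servers(leases, expected):
    """Leases that were handed out by a server other than `expected`."""
    return [l for l in leases if l.get("dhcp-server-identifier") != expected]


if __name__ == "__main__":
    for lease in unexpected_servers(parse_leases(SAMPLE_LEASE), "192.168.10.1"):
        print(lease["interface"], "got its lease from",
              lease["dhcp-server-identifier"])
```

To run it against the real file, read `/var/lib/dhcp/dhclient.leases` and pass its contents to `parse_leases` instead of the sample.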


To make deletes propagate to the dependent rows, add CASCADE to the foreign key when writing the DDL.

[Link: https://sweeteuna.tistory.com/m/71]

| ALTER TABLE PLAYER DROP CONSTRAINT CONSTRAINT_8CD; ALTER TABLE PLAYER ADD FOREIGN KEY (team_id) REFERENCES TEAM (id) ON UPDATE CASCADE; ALTER TABLE PLAYER DROP CONSTRAINT CONSTRAINT_8CD; ALTER TABLE PLAYER ADD FOREIGN KEY (team_id) REFERENCES TEAM (id) ON DELETE CASCADE; |

[Link: https://papimon.tistory.com/90]
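A minimal way to see the effect without touching a real database is an in-memory SQLite session from Python. This is only a sketch under assumptions: SQLite is swapped in for whatever database the linked posts use, the TEAM and PLAYER column layout is invented apart from `team_id` and `id`, and SQLite needs `PRAGMA foreign_keys = ON` before it enforces foreign keys at all.

```python
import sqlite3


def demo_delete_cascade():
    """Delete one team and return the names of the players that remain."""
    conn = sqlite3.connect(":memory:")
    # SQLite ignores foreign keys unless this is switched on per connection.
    conn.execute("PRAGMA foreign_keys = ON")
    conn.execute("CREATE TABLE TEAM (id INTEGER PRIMARY KEY, name TEXT)")
    conn.execute(
        """
        CREATE TABLE PLAYER (
            id INTEGER PRIMARY KEY,
            name TEXT,
            team_id INTEGER REFERENCES TEAM (id) ON DELETE CASCADE
        )
        """
    )
    conn.executemany("INSERT INTO TEAM VALUES (?, ?)", [(1, "red"), (2, "blue")])
    conn.executemany(
        "INSERT INTO PLAYER VALUES (?, ?, ?)",
        [(1, "a", 1), (2, "b", 1), (3, "c", 2)],
    )
    # Removing team 1 also removes players a and b through the cascade.
    conn.execute("DELETE FROM TEAM WHERE id = 1")
    remaining = [row[0] for row in conn.execute("SELECT name FROM PLAYER ORDER BY id")]
    conn.close()
    return remaining


if __name__ == "__main__":
    print(demo_delete_cascade())
```

Without the `ON DELETE CASCADE` clause (and with foreign keys enabled), the same `DELETE` would be rejected because players still reference the team.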


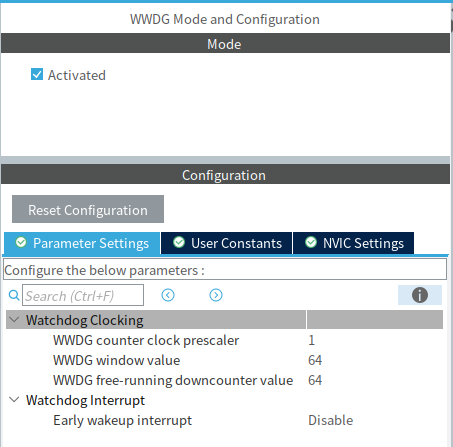

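The IWDG runs from an internal RC clock, so it keeps running even in Stop and Standby modes.

In other words, if you want to use the low-power modes, the IWDG has to be configured a bit more carefully...

The WWDG is a window watchdog: it only lets you refresh the watchdog within a specific time window.

Refresh it too early and the whole system resets; refresh it too late and it resets as well.

If the loop timing is consistent, the WWDG looks like the one to use...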

| Independent watchdog The independent watchdog is based on a 12-bit downcounter and 8-bit prescaler. It is clocked from an independent 40 kHz internal RC and as it operates independently of the main clock, it can operate in Stop and Standby modes. It can be used either as a watchdog to reset the device when a problem occurs, or as a free-running timer for application timeout management. It is hardware- or software-configurable through the option bytes. The counter can be frozen in debug mode. Window watchdog The window watchdog is based on a 7-bit downcounter that can be set as free-running. It can be used as a watchdog to reset the device when a problem occurs. It is clocked from the main clock. It has an early warning interrupt capability and the counter can be frozen in debug mode. |

[Link: https://www.st.com/resource/en/datasheet/stm32f103c8.pdf]


[Link: https://pineenergy.tistory.com/138]

[Link: https://community.st.com/t5/stm32-mcus-products/iwdg-vs-wwdg/td-p/451732]
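To put rough numbers on the two watchdogs, here is a small host-side Python model, not firmware. It assumes the nominal 40 kHz RC clock and 12-bit counter from the datasheet quote above, assumes the IWDG dividers are the powers of two from 4 to 256, and assumes the timeout is `(reload + 1) * prescaler / f_LSI`. The real RC frequency varies from chip to chip, so treat the results as estimates. `window_refresh_outcome` is a purely conceptual, time-based picture of the "too early / too late" rule and does not model the WWDG registers.

```python
LSI_HZ = 40_000  # nominal internal RC frequency from the datasheet quote
IWDG_PRESCALERS = (4, 8, 16, 32, 64, 128, 256)  # assumed divider choices


def iwdg_timeout_ms(prescaler, reload):
    """Estimated IWDG timeout in ms for a divider and 12-bit reload value."""
    if prescaler not in IWDG_PRESCALERS:
        raise ValueError("unsupported prescaler")
    if not 0 <= reload <= 0xFFF:
        raise ValueError("reload must fit in 12 bits")
    return (reload + 1) * prescaler * 1000 / LSI_HZ


def window_refresh_outcome(elapsed_ms, window_opens_ms, timeout_ms):
    """Conceptual window-watchdog rule: refresh only inside the window."""
    if elapsed_ms < window_opens_ms:
        return "reset: refreshed too early"
    if elapsed_ms >= timeout_ms:
        return "reset: refreshed too late"
    return "ok"


if __name__ == "__main__":
    print(iwdg_timeout_ms(4, 0xFFF))        # shortest divider, full reload
    print(iwdg_timeout_ms(256, 0xFFF))      # longest possible timeout
    print(window_refresh_outcome(5, 10, 20))
    print(window_refresh_outcome(15, 10, 20))
    print(window_refresh_outcome(25, 10, 20))
```

The window check shows why the WWDG only suits loops with predictable timing: a loop that sometimes finishes in 5 ms and sometimes in 25 ms would trip it in both directions.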


I don't like LG for various reasons,

but I don't want to go to SK either. Tough call.. -_-

Anyway, I bought two USIMs each for SK and KT,

and will switch plans right away next month.
