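On an SD model, generating with the positive prompt "portrait of usada pekora" gives me some strange middle-aged man.

After a few failed attempts on my own, I got help from GPT (!) and somehow managed a first step?

First, the checkpoint has to be an SD one, not SDXL.

So I picked the easy (?) original checkpoint file.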

Then I create an embedding and a hypernetwork to start with.

Go to the Train tab and select the embedding and the hypernetwork for now.


Download the image files, install ImageMagick, and roughly convert them with the command below.

```bash
mkdir -p dataset
mogrify \
  -path dataset \
  -format jpg \
  -resize 512x512^ \
  -gravity center \
  -crop 512x512+0+0 \
  +repage \
  -quality 95 \
  *.png *.bmp *.webp *.tif
```

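Then I paste that directory into the Dataset directory field.

I have no idea what anything does yet, so I just click Train Embedding.

A frightening time estimate shows up and something starts running.

As in the messages below, the step count climbs and a loss value is reported for each step.

Looking closely, the names of the files I converted earlier seem to be included in the prompt?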

```
Loss: 0.1782198
Step: 394
Last prompt: a dirty painting of i1683731685, art by portrait of usada pekora
Last saved embedding: <none>
Last saved image: <none>

Loss: 0.0547138
Step: 395
Last prompt: a painting of _1_1756696475_w360, art by portrait of usada pekora
Last saved embedding: <none>
Last saved image: <none>

Loss: 0.0913988
Step: 397
Last prompt: a dark painting of hPSbz8dGC0Kzj_e0C25DrQ1IkVSk1fKm3shxQYY_pNi-qD6BnEUCNIuEtYe1a1ri6IHTBFJ7gRuuSrE34KdmxQ, art by portrait of usada pekora
Last saved embedding: <none>
Last saved image: <none>

Loss: 0.0651186
Step: 398
Last prompt: a rendering of ZpuilhK8qsxjuj_Z3nPoINJNXYOYKZv72wVg-943xSclKQ_xs_qC1bJJM32gGWUuJBNEBaMaAQJQzxaJ7txuHg, art by portrait of usada pekora
Last saved embedding: <none>
Last saved image: <none>

Loss: 0.0029331
Step: 499
Last prompt: a nice painting of DhB_-h44CoXqInpAmvHWVzqKGNprsXK6bjNO0nCG0waQX72PREOmRxL9tBvqKFcuxnZULq3L3vFskLcRSeNQvA, art by portrait of usada pekora
Last saved embedding: <none>
Last saved image: <none>
```
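The prompts in that log look like lines from a prompt template with placeholders filled in per image. Here is a minimal sketch of that substitution, assuming the template uses `[name]` for the embedding name and `[filewords]` for the image file name without its extension. The function `expand_template` and the template strings are hypothetical, written only to mirror the log above; this is not the web UI's actual code.

```python
from pathlib import Path

# Template lines modeled on the prompts seen in the training log.
# Assumption: [filewords] comes from the image file name, [name] from the embedding name.
TEMPLATES = [
    "a painting of [filewords], art by [name]",
    "a dirty painting of [filewords], art by [name]",
]


def expand_template(template: str, image_path: str, embedding_name: str) -> str:
    """Fill one template line for one training image (illustrative helper)."""
    filewords = Path(image_path).stem  # file name without directory or extension
    return template.replace("[name]", embedding_name).replace("[filewords]", filewords)


if __name__ == "__main__":
    for template in TEMPLATES:
        print(expand_template(template, "dataset/i1683731685.jpg", "portrait of usada pekora"))
```

If this reading is right, the random-looking file names end up in the training prompt as noise, so renaming the images to something descriptive before training would probably be worth trying.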

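Looking at the console, something does seem to be happening...

The GPU is being worked hard too. Ugh, is the temperature going to be okay?

The GPU fan went past 60% for the first time!

The CPU is not completely idle; it seems to be used a little for feeding files to the GPU.

After it runs for a while, images like this start showing up.

Is it pulling out a sample as some kind of evaluation step?

Probably the training setting that saves an image every 500 steps is what produces these images.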
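To make the guess concrete, here is a small sketch of which steps a "save every N steps" setting would hit, assuming it fires on every multiple of N. The function name `save_steps` is made up for illustration, and 4000 is simply the last step number that appears in this run's saved files.

```python
def save_steps(max_steps: int, every: int) -> list[int]:
    """Steps at which a periodic save would fire, assuming it triggers on each multiple of `every`."""
    if every <= 0:
        return []  # treating 0 as "disabled" is an assumption of this sketch
    return list(range(every, max_steps + 1, every))


if __name__ == "__main__":
    print(save_steps(4000, 500))
```

With an interval of 500 this gives 500, 1000, ... 4000, which lines up with the numbered preview images found after training.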


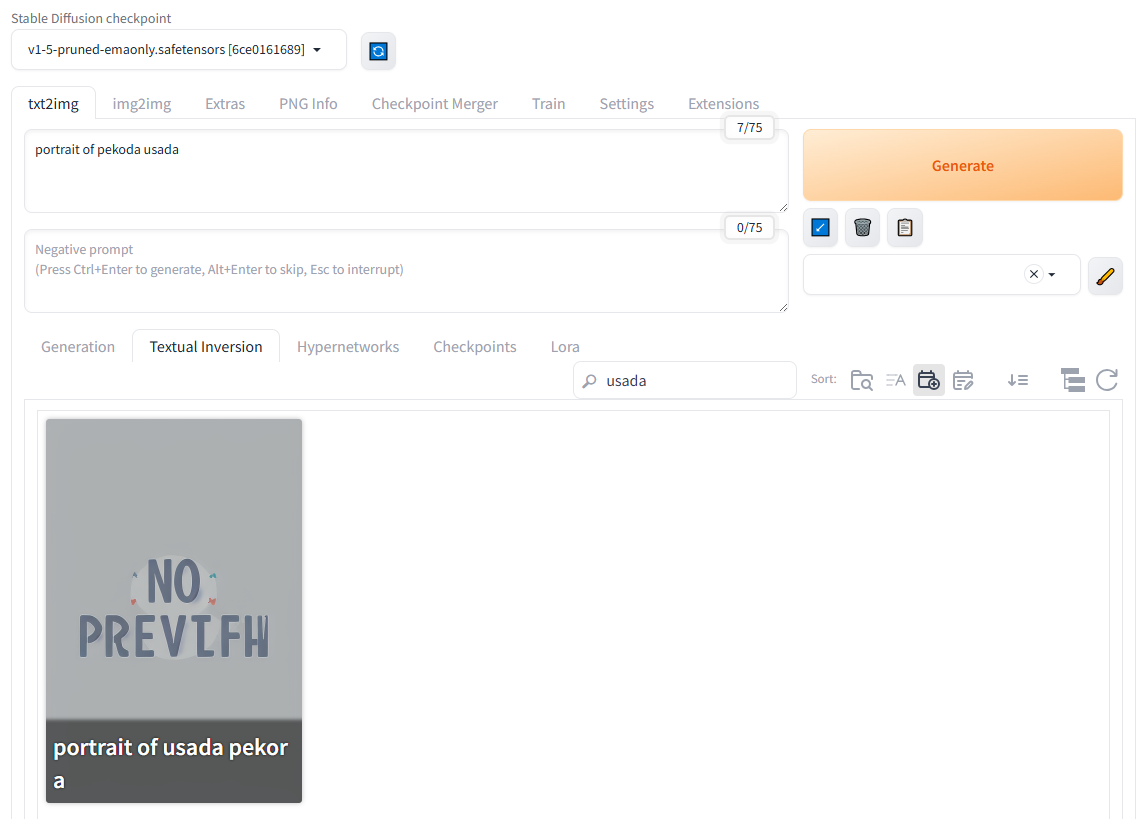

After training, I searched for files with usada in the name.

There are no safetensors files; everything is saved with the .pt extension under embeddings and models/hypernetworks.

```
/mnt/Downloads/stable-diffusion-webui$ find ./ -name '*usada*'
./embeddings/portrait of usada pekora.pt
./textual_inversion/2026-06-10/portrait of usada pekora
./textual_inversion/2026-06-10/portrait of usada pekora/images/portrait of usada pekora-1500.png
./textual_inversion/2026-06-10/portrait of usada pekora/images/portrait of usada pekora-3500.png
./textual_inversion/2026-06-10/portrait of usada pekora/images/portrait of usada pekora-500.png
./textual_inversion/2026-06-10/portrait of usada pekora/images/portrait of usada pekora-3000.png
./textual_inversion/2026-06-10/portrait of usada pekora/images/portrait of usada pekora-2500.png
./textual_inversion/2026-06-10/portrait of usada pekora/images/portrait of usada pekora-2000.png
./textual_inversion/2026-06-10/portrait of usada pekora/images/portrait of usada pekora-1000.png
./textual_inversion/2026-06-10/portrait of usada pekora/images/portrait of usada pekora-4000.png
./textual_inversion/2026-06-10/portrait of usada pekora/embeddings/portrait of usada pekora-500.pt
./textual_inversion/2026-06-10/portrait of usada pekora/embeddings/portrait of usada pekora-3500.pt
./textual_inversion/2026-06-10/portrait of usada pekora/embeddings/portrait of usada pekora-4000.pt
./textual_inversion/2026-06-10/portrait of usada pekora/embeddings/portrait of usada pekora-3000.pt
./textual_inversion/2026-06-10/portrait of usada pekora/embeddings/portrait of usada pekora-1000.pt
./textual_inversion/2026-06-10/portrait of usada pekora/embeddings/portrait of usada pekora-2500.pt
./textual_inversion/2026-06-10/portrait of usada pekora/embeddings/portrait of usada pekora-2000.pt
./textual_inversion/2026-06-10/portrait of usada pekora/embeddings/portrait of usada pekora-1500.pt
./textual_inversion/2026-06-10/portrait of usada pekora/image_embeddings/portrait of usada pekora-1500.png
./textual_inversion/2026-06-10/portrait of usada pekora/image_embeddings/portrait of usada pekora-3500.png
./textual_inversion/2026-06-10/portrait of usada pekora/image_embeddings/portrait of usada pekora-500.png
./textual_inversion/2026-06-10/portrait of usada pekora/image_embeddings/portrait of usada pekora-3000.png
./textual_inversion/2026-06-10/portrait of usada pekora/image_embeddings/portrait of usada pekora-2500.png
./textual_inversion/2026-06-10/portrait of usada pekora/image_embeddings/portrait of usada pekora-2000.png
./textual_inversion/2026-06-10/portrait of usada pekora/image_embeddings/portrait of usada pekora-1000.png
./textual_inversion/2026-06-10/portrait of usada pekora/image_embeddings/portrait of usada pekora-4000.png
./models/hypernetworks/portrait of usada pekora.pt
```

```
26K Jun 11 00:24 'portrait of usada pekora.pt'
84M Jun 10 23:12 'portrait of usada pekora.pt'
26K Jun 10 23:38 'portrait of usada pekora-1000.pt'
26K Jun 10 23:45 'portrait of usada pekora-1500.pt'
26K Jun 10 23:53 'portrait of usada pekora-2000.pt'
26K Jun 11 00:01 'portrait of usada pekora-2500.pt'
26K Jun 11 00:08 'portrait of usada pekora-3000.pt'
26K Jun 11 00:16 'portrait of usada pekora-3500.pt'
26K Jun 11 00:24 'portrait of usada pekora-4000.pt'
26K Jun 10 23:30 'portrait of usada pekora-500.pt'
```
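The listing sorts `-500.pt` last because the names are compared as text. To compare the intermediate embeddings in training order, a sketch like the following sorts them by the step number in the file name. The helper `sort_by_step` is hypothetical, and the `-<step>.pt` naming pattern is taken from the listing above.

```python
import re

# Matches names like "portrait of usada pekora-1500.pt" and captures the step number.
STEP_RE = re.compile(r"-(\d+)\.pt$")


def sort_by_step(filenames):
    """Return only the step-numbered .pt files, ordered by step (illustrative helper)."""
    numbered = []
    for name in filenames:
        match = STEP_RE.search(name)
        if match:  # files without a step suffix, such as the final .pt, are skipped
            numbered.append((int(match.group(1)), name))
    return [name for _, name in sorted(numbered)]


if __name__ == "__main__":
    names = [
        "portrait of usada pekora-1000.pt",
        "portrait of usada pekora-1500.pt",
        "portrait of usada pekora-500.pt",
        "portrait of usada pekora.pt",
    ]
    for name in sort_by_step(names):
        print(name)
```

On a real run, the names could come from something like `pathlib.Path(...).glob("*.pt")` pointed at the `embeddings` folder shown in the `find` output.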

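After refreshing in txt2img, it shows up as below.

It gets added automatically, and clicking Generate produces the result below.

By the way, nothing even remotely similar is drawn unless the prompt is exactly "portrait of usada pekora", so does the whole keyword have to be included?

That would fit how the web UI appears to handle embeddings: an embedding is invoked by its name in the prompt, and this one was named "portrait of usada pekora", so the whole phrase acts as the trigger.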

[Link: https://github.com/AUTOMATIC1111/stable-diffusion-webui/wiki/Textual-Inversion]
