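Vulkan runs fine with a single graphics card, but with two it just doesn't seem to work at all.

It dies in a rather spectacular fashion,

and asking GPT only gets me vague, hand-wavy answers.

For one thing, it says the missing wait4.c isn't what matters, and sure enough the same message shows up even after installing the debug symbols.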

    ~/Downloads/llama-b8902/model$ ../llama-cli -m ./gemma-4-E2B-it-Q4_K_M.gguf
    load_backend: loaded RPC backend from /home/minimonk/Downloads/llama-b8902/libggml-rpc.so
    load_backend: loaded Vulkan backend from /home/minimonk/Downloads/llama-b8902/libggml-vulkan.so
    load_backend: loaded CPU backend from /home/minimonk/Downloads/llama-b8902/libggml-cpu-haswell.so
    Loading model... //home/runner/work/llama.cpp/llama.cpp/ggml/src/ggml-backend.cpp:1367: GGML_ASSERT(n_inputs < GGML_SCHED_MAX_SPLIT_INPUTS) failed
    -[New LWP 3559]
    [New LWP 3560]
    [New LWP 3561]
    [New LWP 3563]
    [New LWP 3564]
    [New LWP 3565]
    [New LWP 3568]
    [New LWP 3570]
    [New LWP 3571]
    [New LWP 3574]
    [Thread debugging using libthread_db enabled]
    Using host libthread_db library "/lib/x86_64-linux-gnu/libthread_db.so.1".
    0x00007ed9e18ea42f in __GI___wait4 (pid=3575, stat_loc=0x0, options=0, usage=0x0) at ../sysdeps/unix/sysv/linux/wait4.c:30
    30 ../sysdeps/unix/sysv/linux/wait4.c: No such file or directory.
    #0 0x00007ed9e18ea42f in __GI___wait4 (pid=3575, stat_loc=0x0, options=0, usage=0x0) at ../sysdeps/unix/sysv/linux/wait4.c:30
    30 in ../sysdeps/unix/sysv/linux/wait4.c
    #1 0x00007ed9e1f5b71b in ggml_print_backtrace () from /home/minimonk/Downloads/llama-b8902/libggml-base.so.0
    #2 0x00007ed9e1f5b8b2 in ggml_abort () from /home/minimonk/Downloads/llama-b8902/libggml-base.so.0
    #3 0x00007ed9e1f77f2d in ggml_backend_sched_split_graph () from /home/minimonk/Downloads/llama-b8902/libggml-base.so.0
    #4 0x00007ed9e1f780b5 in ggml_backend_sched_reserve_size () from /home/minimonk/Downloads/llama-b8902/libggml-base.so.0
    #5 0x00007ed9e20b0fa4 in llama_context::graph_reserve(unsigned int, unsigned int, unsigned int, llama_memory_context_i const*, bool, unsigned long*) () from /home/minimonk/Downloads/llama-b8902/libllama.so.0
    #6 0x00007ed9e20b1adf in llama_context::sched_reserve() () from /home/minimonk/Downloads/llama-b8902/libllama.so.0
    #7 0x00007ed9e20b5869 in llama_context::llama_context(llama_model const&, llama_context_params) () from /home/minimonk/Downloads/llama-b8902/libllama.so.0
    #8 0x00007ed9e20b649b in llama_init_from_model () from /home/minimonk/Downloads/llama-b8902/libllama.so.0
    #9 0x00007ed9e26282a2 in common_get_device_memory_data(char const*, llama_model_params const*, llama_context_params const*, std::vector<ggml_backend_device*, std::allocator<ggml_backend_device*> >&, unsigned int&, unsigned int&, unsigned int&, ggml_log_level) () from /home/minimonk/Downloads/llama-b8902/libllama-common.so.0
    #10 0x00007ed9e2628f52 in common_params_fit_impl(char const*, llama_model_params*, llama_context_params*, float*, llama_model_tensor_buft_override*, unsigned long*, unsigned int, ggml_log_level) () from /home/minimonk/Downloads/llama-b8902/libllama-common.so.0
    #11 0x00007ed9e262c9e2 in common_fit_params(char const*, llama_model_params*, llama_context_params*, float*, llama_model_tensor_buft_override*, unsigned long*, unsigned int, ggml_log_level) () from /home/minimonk/Downloads/llama-b8902/libllama-common.so.0
    #12 0x00007ed9e25fe2ff in common_init_result::common_init_result(common_params&) () from /home/minimonk/Downloads/llama-b8902/libllama-common.so.0
    #13 0x00007ed9e25ff9a8 in common_init_from_params(common_params&) () from /home/minimonk/Downloads/llama-b8902/libllama-common.so.0
    #14 0x00006547aab18d7e in server_context_impl::load_model(common_params&) ()
    #15 0x00006547aaa549b5 in main ()
    [Inferior 1 (process 3556) detached]
    Aborted (core dumped)
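The wait4.c message is just gdb saying it cannot find the glibc source file for frame #0, so it is noise. The line that actually matters in this dump is the `GGML_ASSERT(...) failed` one. Here is a minimal sketch for pulling that line out of a saved log. The helper name `find_ggml_asserts` is made up for illustration, and the pattern is inferred only from the single assertion line in the log above, so other llama.cpp builds may print it differently.

```python
import re

# Pattern inferred from the one assertion line in the log above, e.g.
#   /path/ggml-backend.cpp:1367: GGML_ASSERT(cond) failed
ASSERT_RE = re.compile(
    r"(?P<file>[^\s:]+):(?P<line>\d+): GGML_ASSERT\((?P<cond>.*)\) failed"
)


def find_ggml_asserts(log_text: str) -> list[dict]:
    """Return every GGML_ASSERT failure found in a captured log (hypothetical helper)."""
    return [
        {"file": m["file"], "line": int(m["line"]), "cond": m["cond"]}
        for m in ASSERT_RE.finditer(log_text)
    ]


if __name__ == "__main__":
    # Lines copied from the crash output above.
    sample = (
        "Loading model... //home/runner/work/llama.cpp/llama.cpp/ggml/src/"
        "ggml-backend.cpp:1367: GGML_ASSERT(n_inputs < GGML_SCHED_MAX_SPLIT_INPUTS) failed\n"
        "30 ../sysdeps/unix/sysv/linux/wait4.c: No such file or directory.\n"
    )
    for hit in find_ggml_asserts(sample):
        print(hit["file"], hit["line"], hit["cond"])
```

Running it over the log above should leave only the `ggml-backend.cpp:1367` assertion, which matches frame #3 (`ggml_backend_sched_split_graph`) in the backtrace.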

-sm none

With this option it runs on GPU 0, but when I add the -v option and look at the output,

the memory allocation is 0 MB? Huh?

    load_tensors: layer 0 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 1 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 2 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 3 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 4 assigned to device Vulkan0, is_swa = 0
    load_tensors: layer 5 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 6 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 7 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 8 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 9 assigned to device Vulkan0, is_swa = 0
    load_tensors: layer 10 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 11 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 12 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 13 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 14 assigned to device Vulkan0, is_swa = 0
    load_tensors: layer 15 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 16 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 17 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 18 assigned to device Vulkan1, is_swa = 1
    load_tensors: layer 19 assigned to device Vulkan1, is_swa = 0
    load_tensors: layer 20 assigned to device Vulkan1, is_swa = 1
    load_tensors: layer 21 assigned to device Vulkan1, is_swa = 1
    load_tensors: layer 22 assigned to device Vulkan1, is_swa = 1
    load_tensors: layer 23 assigned to device Vulkan1, is_swa = 1
    load_tensors: layer 24 assigned to device Vulkan1, is_swa = 0
    load_tensors: layer 25 assigned to device Vulkan1, is_swa = 1
    load_tensors: layer 26 assigned to device Vulkan1, is_swa = 1
    load_tensors: layer 27 assigned to device Vulkan1, is_swa = 1
    load_tensors: layer 28 assigned to device Vulkan1, is_swa = 1
    load_tensors: layer 29 assigned to device Vulkan1, is_swa = 0
    load_tensors: layer 30 assigned to device Vulkan1, is_swa = 1
    load_tensors: layer 31 assigned to device Vulkan1, is_swa = 1
    load_tensors: layer 32 assigned to device Vulkan1, is_swa = 1
    load_tensors: layer 33 assigned to device Vulkan1, is_swa = 1
    load_tensors: layer 34 assigned to device Vulkan1, is_swa = 0
    load_tensors: layer 35 assigned to device Vulkan1, is_swa = 0
    load_tensors: offloading output layer to GPU
    load_tensors: offloading 34 repeating layers to GPU
    load_tensors: offloaded 36/36 layers to GPU
    load_tensors: Vulkan0 model buffer size = 0.00 MiB
    load_tensors: Vulkan1 model buffer size = 0.00 MiB
    load_tensors: Vulkan_Host model buffer size = 0.00 MiB
    llama_context: constructing llama_context

    load_tensors: layer 0 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 1 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 2 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 3 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 4 assigned to device Vulkan0, is_swa = 0
    load_tensors: layer 5 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 6 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 7 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 8 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 9 assigned to device Vulkan0, is_swa = 0
    load_tensors: layer 10 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 11 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 12 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 13 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 14 assigned to device Vulkan0, is_swa = 0
    load_tensors: layer 15 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 16 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 17 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 18 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 19 assigned to device Vulkan0, is_swa = 0
    load_tensors: layer 20 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 21 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 22 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 23 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 24 assigned to device Vulkan0, is_swa = 0
    load_tensors: layer 25 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 26 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 27 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 28 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 29 assigned to device Vulkan0, is_swa = 0
    load_tensors: layer 30 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 31 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 32 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 33 assigned to device Vulkan0, is_swa = 1
    load_tensors: layer 34 assigned to device Vulkan0, is_swa = 0
    load_tensors: layer 35 assigned to device Vulkan0, is_swa = 0
    load_tensors: offloading output layer to GPU
    load_tensors: offloading 34 repeating layers to GPU
    load_tensors: offloaded 36/36 layers to GPU
    load_tensors: CPU_Mapped model buffer size = 1756.00 MiB
    load_tensors: Vulkan0 model buffer size = 1407.73 MiB
    .|.../.........-..........\..........|...
    common_init_result: added <eos> logit bias = -inf
    common_init_result: added <|tool_response> logit bias = -inf
    common_init_result: added <turn|> logit bias = -inf
    llama_context: constructing llama_context
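Eyeballing 36 `layer N assigned to device ...` lines twice is tedious, so here is a rough sketch that tallies layers per device and collects the `model buffer size` values from a saved `-v` log. `summarize_load` is a hypothetical helper name, and both patterns are guessed from the two logs above only. As for the 0.00 MiB values: the crash backtrace passes through `common_fit_params` and `common_get_device_memory_data`, so they may come from a measuring pass that runs before the real load, but that is my guess and not something these logs confirm.

```python
import re
from collections import Counter

# Patterns guessed from the two -v logs above; they do not rely on line breaks,
# so they also work on a log that was pasted as one long line.
LAYER_RE = re.compile(r"load_tensors: layer\s+(\d+) assigned to device (\S+?),")
BUFFER_RE = re.compile(r"load_tensors:\s+(\S+) model buffer size =\s+([\d.]+) MiB")


def summarize_load(log_text: str):
    """Return (layers per device, model buffer size in MiB per buffer)."""
    layers = Counter(device for _, device in LAYER_RE.findall(log_text))
    buffers = {name: float(size) for name, size in BUFFER_RE.findall(log_text)}
    return dict(layers), buffers


if __name__ == "__main__":
    # Two lines in the same shape as the -sm none log above.
    demo = (
        "load_tensors: layer 0 assigned to device Vulkan0, is_swa = 1 "
        "load_tensors: Vulkan0 model buffer size = 1407.73 MiB"
    )
    print(summarize_load(demo))
```

Fed the first log it should report 18 layers each on Vulkan0 and Vulkan1 with every buffer at 0.0, and fed the `-sm none` log all 36 layers on Vulkan0 with a non-zero buffer.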

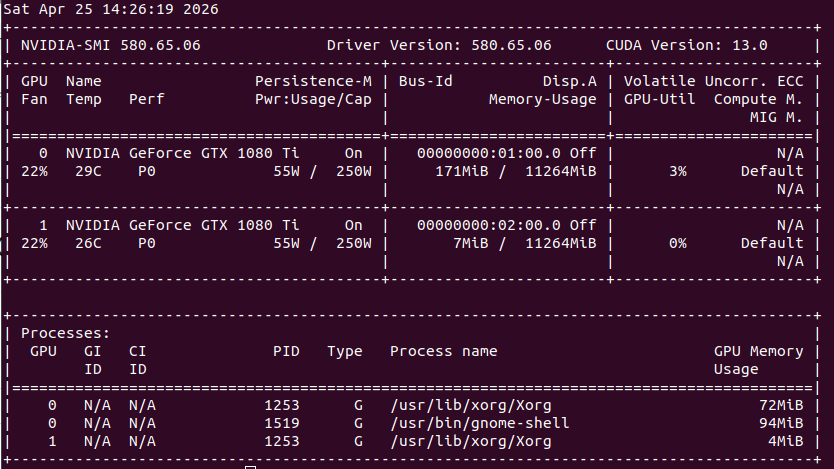

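I tried restricting the graphics cards Vulkan can use to one (number 0, then number 1) and then to both,

and it feels like... it fails to allocate memory through Vulkan and dies.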

    $ GGML_VK_VISIBLE_DEVICES=0 ./llama-cli -m ../model/gemma-4-E2B-it-Q4_K_M.gguf -v
    llama_model_load_from_file_impl: using device Vulkan0 (NVIDIA GeForce GTX 1080 Ti) (0000:01:00.0) - 10985 MiB free
    load_tensors: offloading output layer to GPU
    load_tensors: offloading 34 repeating layers to GPU
    load_tensors: offloaded 36/36 layers to GPU
    load_tensors: Vulkan0 model buffer size = 0.00 MiB
    load_tensors: Vulkan_Host model buffer size = 0.00 MiB
    llama_context: enumerating backends
    llama_context: backend_ptrs.size() = 2
    sched_reserve: reserving ...
    sched_reserve: max_nodes = 4816
    sched_reserve: reserving full memory module
    sched_reserve: worst-case: n_tokens = 512, n_seqs = 1, n_outputs = 1
    graph_reserve: reserving a graph for ubatch with n_tokens = 1, n_seqs = 1, n_outputs = 1
    sched_reserve: Flash Attention was auto, set to enabled
    sched_reserve: resolving fused Gated Delta Net support:
    graph_reserve: reserving a graph for ubatch with n_tokens = 1, n_seqs = 1, n_outputs = 1
    sched_reserve: fused Gated Delta Net (autoregressive) enabled
    graph_reserve: reserving a graph for ubatch with n_tokens = 16, n_seqs = 1, n_outputs = 16
    sched_reserve: fused Gated Delta Net (chunked) enabled
    graph_reserve: reserving a graph for ubatch with n_tokens = 512, n_seqs = 1, n_outputs = 512
    graph_reserve: reserving a graph for ubatch with n_tokens = 1, n_seqs = 1, n_outputs = 1
    graph_reserve: reserving a graph for ubatch with n_tokens = 512, n_seqs = 1, n_outputs = 512
    sched_reserve: Vulkan0 compute buffer size = 515.00 MiB
    sched_reserve: Vulkan_Host compute buffer size = 281.52 MiB
    sched_reserve: graph nodes = 1500
    sched_reserve: graph splits = 2
    sched_reserve: reserve took 13.68 ms, sched copies = 1
    common_memory_breakdown_print: | memory breakdown [MiB] | total free self model context compute unaccounted |
    common_memory_breakdown_print: | - Vulkan0 (GTX 1080 Ti) | 11510 = 10975 + (2702 = 1407 + 780 + 515) + 17592186042248 |
    common_memory_breakdown_print: | - Host | 2037 = 1756 + 0 + 281 |

    $ GGML_VK_VISIBLE_DEVICES=1 ./llama-cli -m ../model/gemma-4-E2B-it-Q4_K_M.gguf -v
    llama_model_load_from_file_impl: using device Vulkan0 (NVIDIA GeForce GTX 1080 Ti) (0000:02:00.0) - 11393 MiB free
    load_tensors: offloading output layer to GPU
    load_tensors: offloading 34 repeating layers to GPU
    load_tensors: offloaded 36/36 layers to GPU
    load_tensors: Vulkan0 model buffer size = 0.00 MiB
    load_tensors: Vulkan_Host model buffer size = 0.00 MiB
    llama_context: enumerating backends
    llama_context: backend_ptrs.size() = 2
    sched_reserve: reserving ...
    sched_reserve: max_nodes = 4816
    sched_reserve: reserving full memory module
    sched_reserve: worst-case: n_tokens = 512, n_seqs = 1, n_outputs = 1
    graph_reserve: reserving a graph for ubatch with n_tokens = 1, n_seqs = 1, n_outputs = 1
    sched_reserve: Flash Attention was auto, set to enabled
    sched_reserve: resolving fused Gated Delta Net support:
    graph_reserve: reserving a graph for ubatch with n_tokens = 1, n_seqs = 1, n_outputs = 1
    sched_reserve: fused Gated Delta Net (autoregressive) enabled
    graph_reserve: reserving a graph for ubatch with n_tokens = 16, n_seqs = 1, n_outputs = 16
    sched_reserve: fused Gated Delta Net (chunked) enabled
    graph_reserve: reserving a graph for ubatch with n_tokens = 512, n_seqs = 1, n_outputs = 512
    graph_reserve: reserving a graph for ubatch with n_tokens = 1, n_seqs = 1, n_outputs = 1
    graph_reserve: reserving a graph for ubatch with n_tokens = 512, n_seqs = 1, n_outputs = 512
    sched_reserve: Vulkan0 compute buffer size = 515.00 MiB
    sched_reserve: Vulkan_Host compute buffer size = 281.52 MiB
    sched_reserve: graph nodes = 1500
    sched_reserve: graph splits = 2
    sched_reserve: reserve took 12.95 ms, sched copies = 1
    common_memory_breakdown_print: | memory breakdown [MiB] | total free self model context compute unaccounted |
    common_memory_breakdown_print: | - Vulkan0 (GTX 1080 Ti) | 11510 = 11382 + (2702 = 1407 + 780 + 515) + 17592186041840 |
    common_memory_breakdown_print: | - Host | 2037 = 1756 + 0 + 281 |
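The `unaccounted` column in the two single-GPU breakdowns (17592186042248 and 17592186041840 MiB) obviously isn't real memory. Both sit just under 2^44, which is what a small negative number looks like if it is stored as an unsigned 64-bit byte count and then divided down to MiB (2^64 / 2^20 = 2^44). That storage detail is my assumption, not something I checked in the llama.cpp source, but the arithmetic is easy to sanity-check against `total - free - self` from the same rows. `signed_unaccounted` below is just an illustrative helper name.

```python
# Assumption: "unaccounted" is computed in bytes as an unsigned 64-bit value and
# then printed in MiB, so a negative result wraps around to just under 2**44.
MIB_WRAP = 2**64 // 2**20  # == 2**44


def signed_unaccounted(printed_mib: int) -> int:
    """Undo the assumed unsigned wrap-around when the value is implausibly large."""
    if printed_mib >= MIB_WRAP // 2:
        return printed_mib - MIB_WRAP
    return printed_mib


if __name__ == "__main__":
    # (label, total, free, self, printed unaccounted), all in MiB, from the logs above.
    rows = [
        ("GGML_VK_VISIBLE_DEVICES=0", 11510, 10975, 2702, 17592186042248),
        ("GGML_VK_VISIBLE_DEVICES=1", 11510, 11382, 2702, 17592186041840),
    ]
    for label, total, free, self_mib, printed in rows:
        print(label, signed_unaccounted(printed), total - free - self_mib)
```

The unwrapped values come out at about -2168 and -2576 MiB, within a couple of MiB of `total - free - self` (-2167 and -2574), with the small gap presumably down to each column being rounded separately. So the huge number looks like a display quirk where `free + self` exceeds `total`, not a sign that terabytes went missing.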

    $ GGML_VK_VISIBLE_DEVICES=0,1 ./llama-cli -m ../model/gemma-4-E2B-it-Q4_K_M.gguf -v
    llama_model_load_from_file_impl: using device Vulkan0 (NVIDIA GeForce GTX 1080 Ti) (0000:01:00.0) - 11009 MiB free
    llama_model_load_from_file_impl: using device Vulkan1 (NVIDIA GeForce GTX 1080 Ti) (0000:02:00.0) - 11392 MiB free
    load_tensors: offloading output layer to GPU
    load_tensors: offloading 34 repeating layers to GPU
    load_tensors: offloaded 36/36 layers to GPU
    load_tensors: Vulkan0 model buffer size = 0.00 MiB
    load_tensors: Vulkan1 model buffer size = 0.00 MiB
    load_tensors: Vulkan_Host model buffer size = 0.00 MiB
    llama_context: enumerating backends
    llama_context: backend_ptrs.size() = 3
    llama_context: pipeline parallelism enabled
    sched_reserve: reserving ...
    sched_reserve: max_nodes = 4824
    sched_reserve: reserving full memory module
    sched_reserve: worst-case: n_tokens = 512, n_seqs = 1, n_outputs = 1
    graph_reserve: reserving a graph for ubatch with n_tokens = 1, n_seqs = 1, n_outputs = 1
    sched_reserve: Flash Attention was auto, set to enabled
    sched_reserve: resolving fused Gated Delta Net support:
    graph_reserve: reserving a graph for ubatch with n_tokens = 1, n_seqs = 1, n_outputs = 1
    sched_reserve: fused Gated Delta Net (autoregressive) enabled
    graph_reserve: reserving a graph for ubatch with n_tokens = 16, n_seqs = 1, n_outputs = 16
    sched_reserve: fused Gated Delta Net (chunked) enabled
    graph_reserve: reserving a graph for ubatch with n_tokens = 512, n_seqs = 1, n_outputs = 512
    /home/runner/work/llama.cpp/llama.cpp/ggml/src/ggml-backend.cpp:1367: GGML_ASSERT(n_inputs < GGML_SCHED_MAX_SPLIT_INPUTS) failed
    [New LWP 12120]
    [New LWP 12121]
    [New LWP 12122]
    [New LWP 12124]
    [New LWP 12125]
    [New LWP 12126]
    [New LWP 12129]
    [New LWP 12131]
    [New LWP 12132]
    [New LWP 12135]
    [Thread debugging using libthread_db enabled]
    Using host libthread_db library "/lib/x86_64-linux-gnu/libthread_db.so.1".
    0x0000743ccfeea42f in __GI___wait4 (pid=12136, stat_loc=0x0, options=0, usage=0x0) at ../sysdeps/unix/sysv/linux/wait4.c:30
    30 ../sysdeps/unix/sysv/linux/wait4.c: No such file or directory.
